I tried to use Automerge again, and failed.

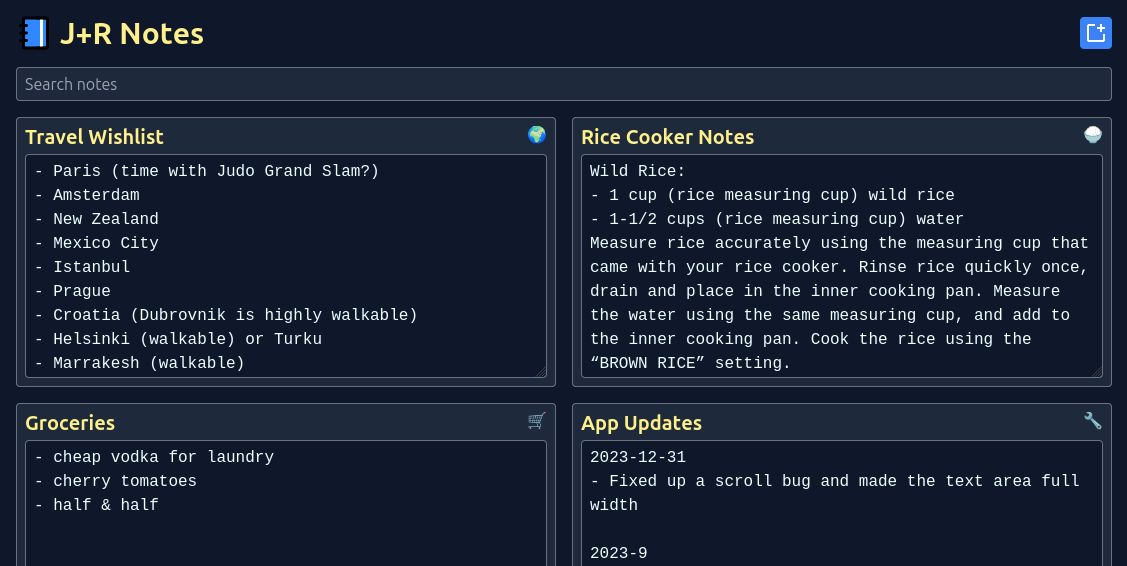

For those of you who aren’t familiar, Automerge is a neat library that helps with building collaborative and local-first applications. It’s pretty cool! I work on a collaborative notes application that does not handle concurrent edits very well, and Automerge is one of the main contenders for improving that situation.

I gave Automerge a try in 2023 and wasn’t able to get it working, to my chagrin. This weekend there was an event in Vancouver for local-first software with one of the main Automerge authors, so I decided to attend and give it another try. I made a fair bit of progress, but ultimately gave up after spending ~5 hours on the problem. A few thoughts+observations:

I am going off the beaten path (web)

Automerge’s “golden path” is web apps. The core of Automerge is written in Rust, but it’s primarily used via WASM in the browser.

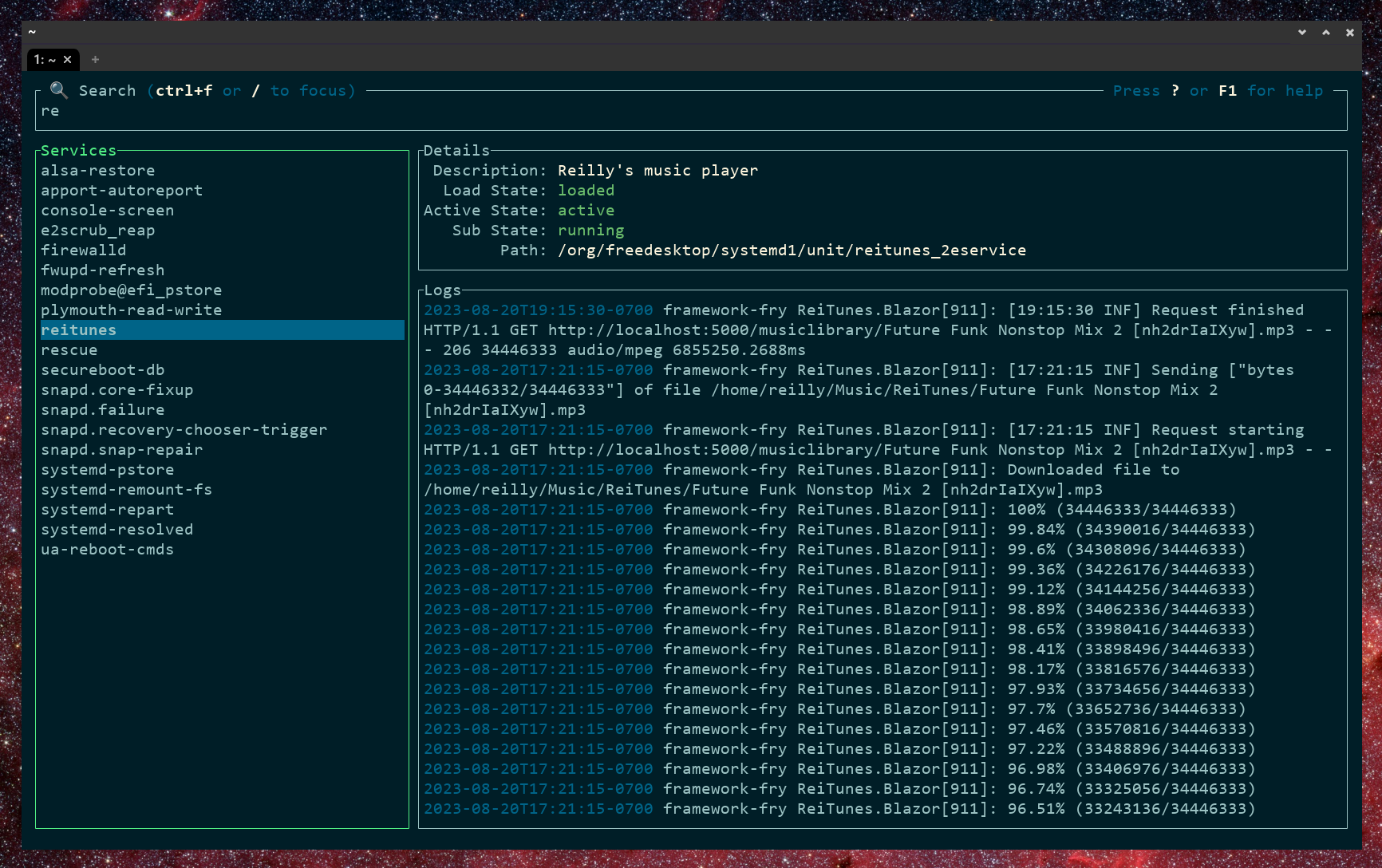

This approach is unpleasant for me; I like Rust, I have a good understanding of how code runs+executes on a “real computer”, and I do not want to write an application where 99% of the business logic runs in the browser. Instead, I tried to write an application where my Rust backend was the primary Automerge node and browser/JS Automerge nodes would talk to it.

This did not go well; the documentation and ergonomics of the Rust library are lacking, and most tutorials assume that you are using the JS wrapper around the Rust library. And then when I tried to use the JS version in my simple web UI, the docs assumed a level of web development sophistication that I don’t have.

To be clear, this is mostly a me problem: primarily targeting the browser is absolutely the way to go in 2025!

I am going off the beaten path (local-first)

Automerge tries to solve a lot of problems related to local-first software. But I wanted to “start small” and solve the problem of concurrent text editing for an application that isn’t local-first. In retrospect this was a mistake; the documentation was written for a very different audience than me, and I wasn’t especially aligned with what other people at the event were building.

Chrome is winning

Something that was discussed at the event: if you are building entirely in-browser local-first applications you may want to target Chrome, because Firefox is way behind on several new+useful APIs. This is sad, but not surprising.

What next?

I think it’s possible to build an Automerge-based collaborative text editor the way I want, but it’s a lot harder than I expected. I’m going to shelve this and revisit it next time I have time+energy to hack on it.

